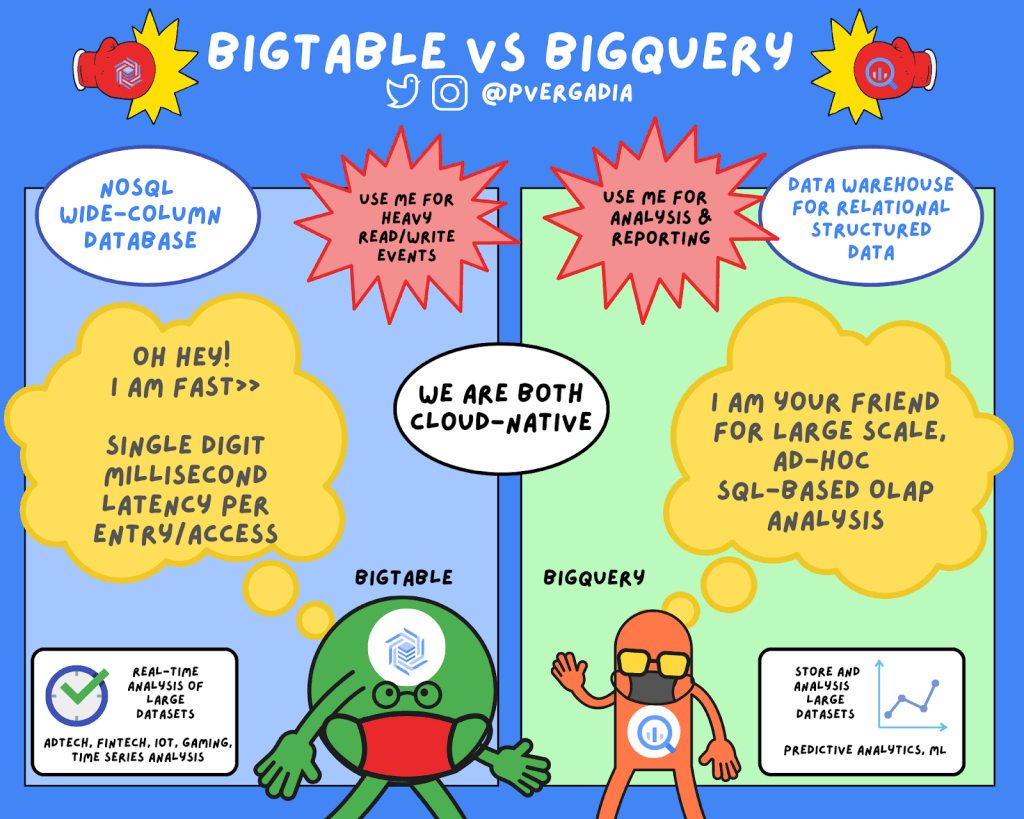

Bigtable vs BigQuery

Syah Ismail, 2021-11-03

Last week, we took a look at Cloud Bigtable. If you know a thing or two about big data, you may wonder how Bigtable differs from BigQuery. While these two services have several similarities, including “Big” in their names, they support very different use cases in your big data ecosystem.

At a high level, Bigtable is a NoSQL wide-column database. It’s optimized for low latency, large numbers of reads and writes, and maintaining performance at scale. Typical Bigtable workloads combine high scale or throughput with strict latency requirements, as in IoT, AdTech, and FinTech. If high throughput and low latency at scale are not priorities for you, then another NoSQL database like Firestore might be a better fit.

On the other hand, BigQuery is an enterprise data warehouse for large amounts of relational structured data. It is optimized for large-scale, ad-hoc SQL-based analysis and reporting, which makes it best suited for gaining organizational insights. You can even use BigQuery to analyze data from Cloud Bigtable.

Characteristics of Cloud Bigtable

Bigtable is a NoSQL database that is designed to support large, scalable applications. Use Bigtable when you are building an application that must scale to a very high number of reads and writes per second. Bigtable throughput can be adjusted by adding or removing nodes: each node provides up to 10,000 queries per second (read and write). You can use Bigtable as the storage engine for large-scale, low-latency applications as well as throughput-intensive data processing and analytics. It offers high availability with an SLA of 99.5% for zonal instances. It’s strongly consistent within a single cluster; replication adds eventual consistency across two clusters and raises the SLA to 99.99%.
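
To make the per-node figure concrete, here is a rough sizing sketch in Python. It assumes the 10,000 queries-per-second ceiling quoted above and a hypothetical `headroom` margin that is not part of any Bigtable API; real capacity depends on storage type, row size, and access pattern, so treat the result as a starting estimate only.

```python
import math

# Assumption: the per-node ceiling quoted in the text (reads and writes combined).
QPS_PER_NODE = 10_000


def nodes_needed(target_qps: int, headroom: float = 0.3) -> int:
    """Estimate how many nodes a target QPS needs.

    `headroom` is a hypothetical safety margin: the fraction of each
    node's capacity that is deliberately left unused.
    """
    if target_qps < 0:
        raise ValueError("target_qps must be non-negative")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_per_node = QPS_PER_NODE * (1 - headroom)
    # A cluster always has at least one node, even with no traffic.
    return max(1, math.ceil(target_qps / usable_per_node))


if __name__ == "__main__":
    print(nodes_needed(25_000, headroom=0.0))  # no margin
    print(nodes_needed(50_000))                # default 30% margin
```

Rounding up matters here: a fractional node cannot be provisioned, so any remainder costs one more whole node.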

Cloud Bigtable is a key-value store that is designed as a sparsely populated table. It can scale to billions of rows and thousands of columns, enabling you to store terabytes or even petabytes of data. This design also helps store large amounts of data per row or per item, making it great for machine learning predictions. It is an ideal data source for MapReduce-style operations and integrates easily with existing big data tools such as Hadoop, Dataflow, and Dataproc. It also supports the open-source HBase API standard to easily integrate with the Apache ecosystem.
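
The sparse, key-value layout described above can be pictured with a toy in-memory model. This is only an illustration in plain Python, not the Bigtable client API: the class name `SparseTable`, its methods, and the sample row keys are all hypothetical. It keeps row keys sorted, as Bigtable does, so rows that share a key prefix sit next to each other.

```python
from bisect import bisect_left
from typing import Dict, List, Optional


class SparseTable:
    """Toy model of a sparse wide-column table (not the Bigtable API)."""

    def __init__(self) -> None:
        # Each row stores only the columns that were actually written.
        self._rows: Dict[str, Dict[str, bytes]] = {}
        # Row keys are kept in sorted order to support prefix scans.
        self._keys: List[str] = []

    def put(self, row_key: str, column: str, value: bytes) -> None:
        if row_key not in self._rows:
            self._rows[row_key] = {}
            self._keys.insert(bisect_left(self._keys, row_key), row_key)
        self._rows[row_key][column] = value

    def get(self, row_key: str, column: str) -> Optional[bytes]:
        # A missing cell costs nothing to store and simply reads as None.
        return self._rows.get(row_key, {}).get(column)

    def scan_prefix(self, prefix: str) -> List[str]:
        """Return all row keys starting with `prefix`, in sorted order."""
        matches = []
        for key in self._keys[bisect_left(self._keys, prefix):]:
            if not key.startswith(prefix):
                break
            matches.append(key)
        return matches


if __name__ == "__main__":
    table = SparseTable()
    table.put("sensor#001#20211103", "metrics:temp", b"21.5")
    table.put("sensor#001#20211104", "metrics:temp", b"22.0")
    table.put("sensor#002#20211103", "metrics:humidity", b"40")
    print(table.scan_prefix("sensor#001#"))
    print(table.get("sensor#002#20211103", "metrics:temp"))
```

Because empty cells take no space, a table can have thousands of possible columns while each row holds only a few of them.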

Characteristics of BigQuery

BigQuery is a petabyte-scale data warehouse designed to ingest, store, analyze, and visualize data with ease. Typically, you’ll collect large amounts of data from across your databases and other third-party systems to answer specific questions. You can ingest this data into BigQuery by uploading it in a batch or by streaming data directly to enable real-time insights. BigQuery supports a standard SQL dialect that is ANSI-compliant, so you are all set if you already know SQL. In practice, you would serve an application with Bigtable as its database, whereas applications rarely run BigQuery queries as part of serving requests. Cloud Bigtable shines in the serving path and BigQuery shines in analytics.

Once your data is in BigQuery, you can start performing queries on it. BigQuery is a great choice when your queries require you to scan a large table or you need to look across the entire dataset. This can include queries such as sums, averages, counts, groupings, or even queries for creating machine learning models. Typical BigQuery use cases include large-scale storage and analysis or online analytical processing (OLAP).
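
As a rough picture of the aggregate queries mentioned above, the sketch below runs a count, sum, and average grouped by one column. It uses Python's built-in `sqlite3` module purely as a local stand-in so the example runs anywhere; the `orders` table, its columns, and the `summarize_orders` helper are hypothetical. In BigQuery the same style of SQL would run against a warehouse table, and the dialect details may differ.

```python
import sqlite3
from typing import Iterable, List, Tuple


def summarize_orders(
    records: Iterable[Tuple[str, float]],
) -> List[Tuple[str, int, float, float]]:
    """Return (region, order count, total amount, average amount) per region."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
        conn.executemany("INSERT INTO orders VALUES (?, ?)", records)
        # The query scans the whole table, which is the access pattern
        # a data warehouse is built for.
        return conn.execute(
            """
            SELECT region,
                   COUNT(*)    AS order_count,
                   SUM(amount) AS total_amount,
                   AVG(amount) AS average_amount
            FROM orders
            GROUP BY region
            ORDER BY region
            """
        ).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    sample = [("APAC", 120.0), ("APAC", 80.0), ("EMEA", 50.0)]
    for row in summarize_orders(sample):
        print(row)
```

Note that the query never looks up a single row by key. That is the opposite of the Bigtable access pattern, where a request reads or writes a small number of rows identified by their keys.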

Common characteristics

BigQuery and Bigtable are both cloud-native, and both are backed by SLAs. Since updates and upgrades happen transparently behind the scenes, you don’t have to worry about maintenance windows or planning downtime for either service. In addition, they offer virtually unlimited scale, automatic sharding, and automatic failure recovery (with replication). Both BigQuery and Bigtable also separate processing from storage, which helps maximize throughput for transactions and queries alike.